AI, machine learning and the race to improve cybersecurity

The majority of information security teams’ cybersecurity analysts are overwhelmed today analysing security logs, thwarting breach attempts, investigating potential fraud incidents and more. 69% of senior executives believe AI and machine learning are necessary to respond to cyberattacks according to the Capgemini study, Reinventing Cybersecurity with Artificial Intelligence.

The following graphic compares the percentage of organisations by industry who are relying on AI to improve their cybersecurity. 80% of telecommunications executives believe their organisation would not be able to respond to cyberattacks without AI, with the average being 69% of all enterprises across seven industries.

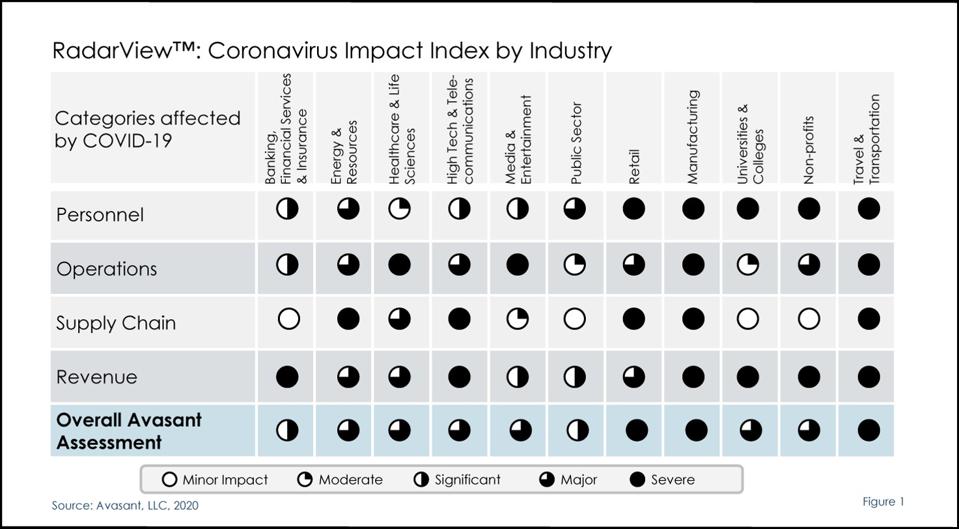

The bottom line is all organisations have an urgent need to improve endpoint security and resilience, protect privileged access credentials, reduce fraudulent transactions, and secure every mobile device applying Zero Trust principles. Many are relying on AI and machine learning to determine if login and resource requests are legitimate or not based on past behavioral and system use patterns.

Several of the top 10 companies to watch take into account a diverse series of indicators to determine if a login attempt, transaction, or system resource request is legitimate or not. They’re able to assign a single score to a specific event and predict if it’s legitimate or not. Kount’s Omniscore is an example of how AI and ML are providing fraud analysts with insights needed to reduce false positives and improve customer buying experiences while thwarting fraud.

The following are the top ten cybersecurity companies to watch in 2020:

Absolute

Absolute serves as the industry benchmark for endpoint resilience, visibility and control. Embedded in over a half-billion devices, the company enables more than 12,000 customers with self-healing endpoint security, always-connected visibility into their devices, data, users, and applications – whether endpoints are on or off the corporate network – and the ultimate level of control and confidence required for the modern enterprise.

To thwart attackers, organisations continue to layer on security controls — Gartner estimates that more than $174B will be spent on security by 2022, and of that approximately $50B will be dedicated protecting the endpoint. Absolute’s Endpoint Security Trends Report finds that in spite of the astronomical investments being made, 100 percent of endpoint controls eventually fail and more than one in three endpoints are unprotected at any given time.

All of this has IT and security administrators grappling with increasing complexity and risk levels, while also facing mounting pressure to ensure endpoint controls maintain integrity, availability and functionality at all times, and deliver their intended value.

Organisations need complete visibility and real-time insights in order to pinpoint the dark endpoints, identify what’s broken and where gaps exist, as well as respond and take action quickly. Absolute mitigates this universal law of security decay and empowers organisations to build an enterprise security approach that is intelligent, adaptive and self-healing. Rather than perpetuating a false sense of security, Absolute provides a single source of truth and the diamond image of resilience for endpoints.

Centrify

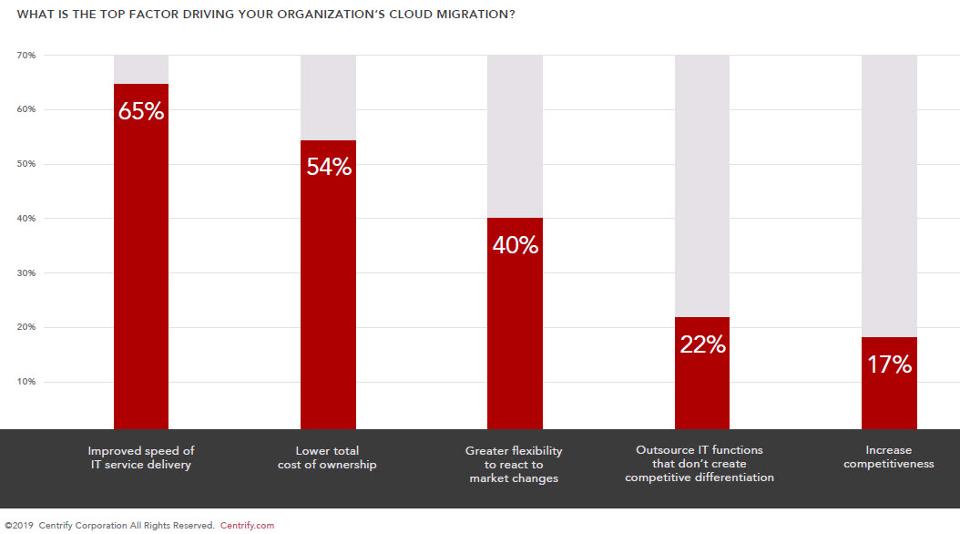

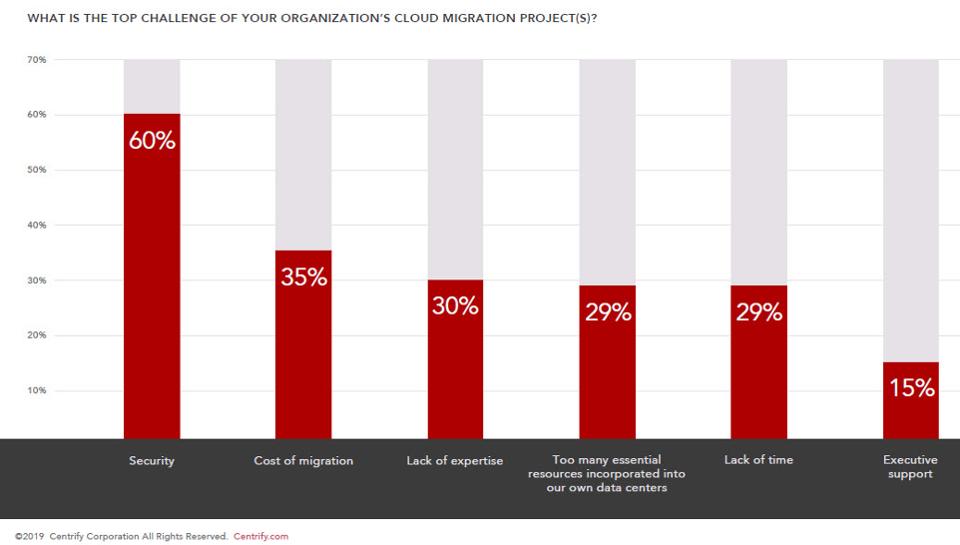

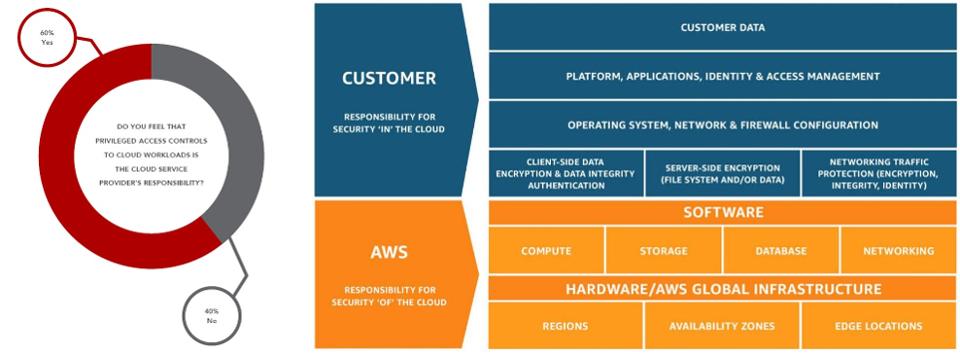

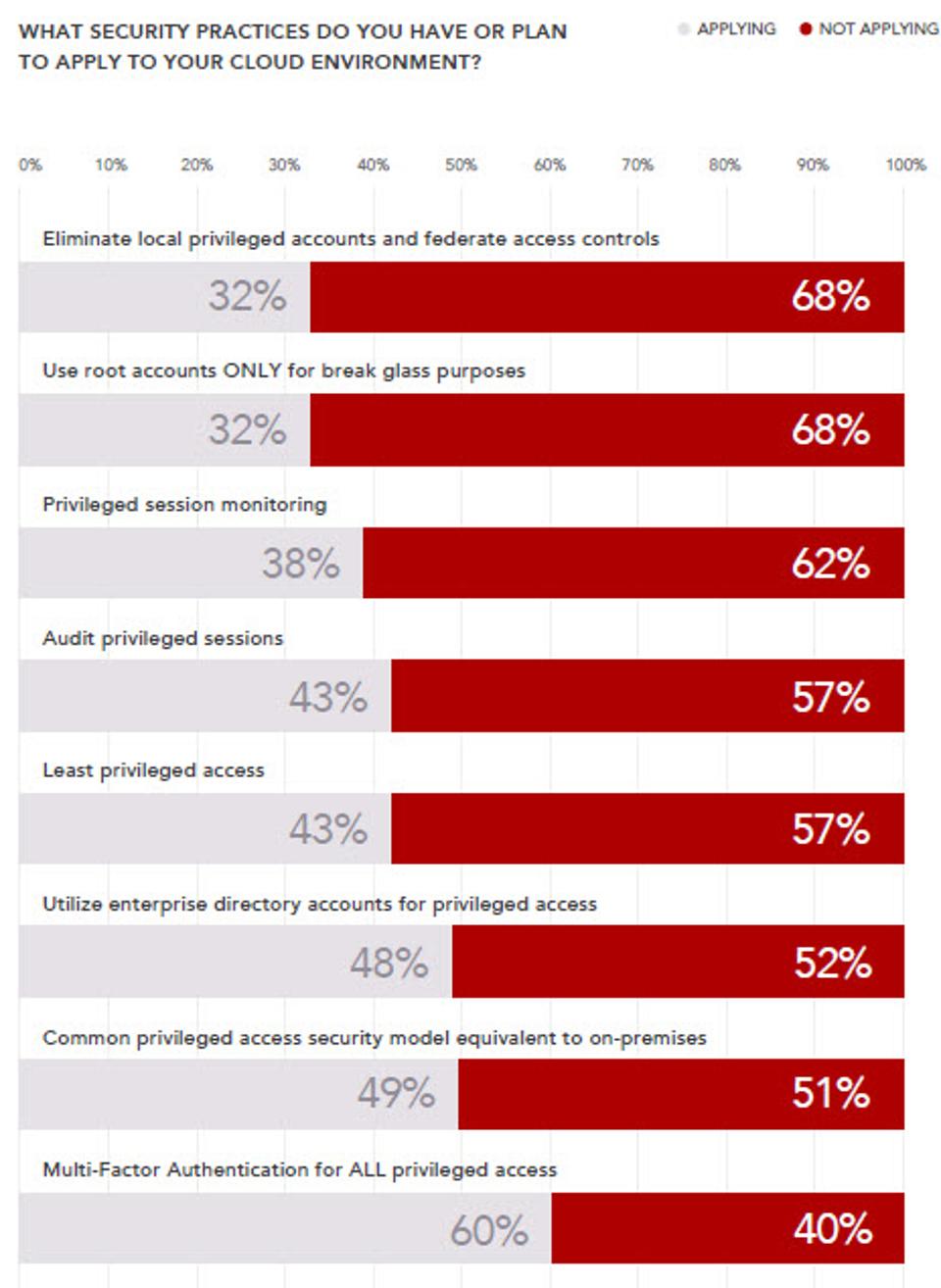

Centrify is redefining the legacy approach to Privileged Access Management (PAM) with an Identity-Centric approach based on Zero Trust principles.

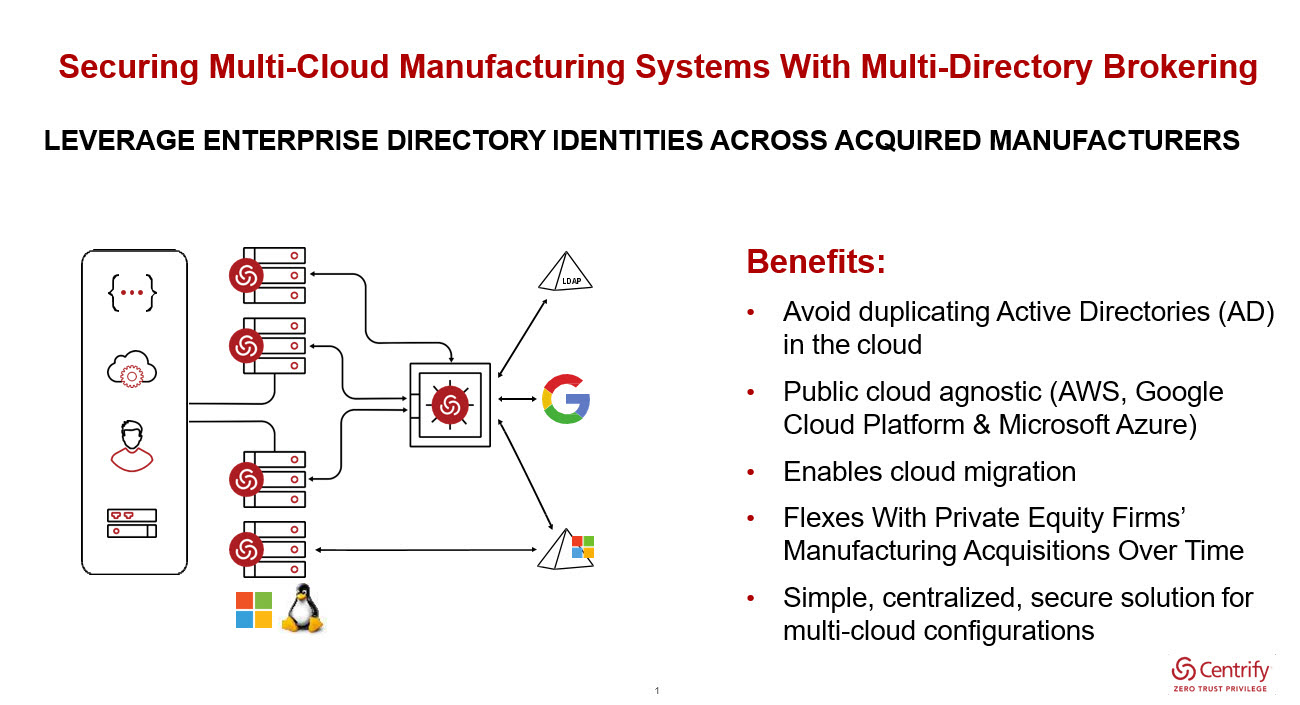

Centrify’s 15-year history began in Active Directory (AD) bridging, and it was the first vendor to join UNIX and Linux systems with Active Directory, allowing for easy management of privileged identities across a heterogeneous environment. It then extended these capabilities to systems being hosted in IaaS environments like AWS and Microsoft Azure, and offered the industry’s first PAM-as-a-Service, which continues to be the only offering in the market with a true multi-tenant, cloud architecture.

Applying its deep expertise in infrastructure allowed Centrify to redefine the legacy approach to PAM and introduce a server’s capability to self-defend against cyber threats across the ever-expanding modern enterprise infrastructure.

Centrify Identity-Centric PAM establishes a root of trust for critical enterprise resources, and then grants least privilege access by verifying who is requesting access, the context of the request, and the risk of the access environment. By implementing least privilege access, Centrify minimises the attack surface, improves audit and compliance visibility, and reduces risk, complexity, and costs for the modern, hybrid enterprise. Over half of the Fortune 100, the world’s largest financial institutions, intelligence agencies, and critical infrastructure companies, all trust Centrify to stop the leading cause of breaches – privileged credential abuse.

Research firm Gartner predicts that by 2021, approximately 75% of large enterprises will utilise privileged access management products, up from approximately 50% in 2018 in their Forecast Analysis: Information Security and Risk Management, Worldwide, 4Q18 Update published March 29, 2019 (client access reqd). This is not surprising, considering that according to an estimate by Forrester Research, 80% of today’s breaches are caused by weak, default, stolen, or otherwise compromised privileged credentials.

Deep Instinct

Deep Instinct applies artificial intelligence’s deep learning to cybersecurity. Leveraging deep learning’s predictive capabilities, Deep Instinct’s on-device solution protects against zero-day threats and APT attacks with unmatched accuracy. Deep Instinct safeguards the enterprise’s endpoints and/or any mobile devices against any threat, on any infrastructure, whether or not connected to the network or to the Internet.

By applying deep learning technology to cybersecurity, enterprises can now gain unmatched protection against unknown and evasive cyber-attacks from any source. Deep Instinct brings a completely new approach to cybersecurity enabling cyber-attacks to be identified and blocked in real-time before any harm can occur. Deep Instinct USA is headquartered in San Francisco, CA and Deep Instinct Israel is headquartered in Tel Aviv, Israel.

Infoblox

Infoblox empowers organisations to bring next-level simplicity, security, reliability and automation to traditional networks and digital transformations, such as SD-WAN, hybrid cloud and IoT. Combining next-level simplicity, security, reliability, and automation, Infoblox can cut manual tasks by 70% and make organisations’ threat analysts 3x more productive.

While their history is in DDI devices, they are succeeding in providing DDI and network security services on an as-a-service (-aaS) basis. Their BloxOne DDI application, built on their BloxOne cloud-native platform, helps enable IT professionals to manage their networks, whether they’re based on on-prem, cloud-based, or hybrid architectures. BloxOne Threat Defense application leverages the data provided by DDI to monitor network traffic, proactively identify threats, and quickly inform security systems and network managers of breaches, working with the existing security stack to identify and mitigate security threats quickly, automatically, and more efficiently. The BloxOne platform provides a secure, integrated platform for centralising the management of identity data and services across the network. A recognised industry leader, Infoblox has a 52% market share in the DDI networking market comprised of 8,000 customers, including 59% of the Fortune 1000 and 58% of the Forbes 2000.

Kount

Kount’s award-winning, AI-driven fraud prevention empowers digital businesses, online merchants, and payment service providers around the world to protect against payments fraud, new account creation fraud, and account takeover. With Kount, businesses approve more good orders, uncover new revenue streams, improve customer experience, and dramatically improve their bottom line all while minimising fraud management cost and losses. Through Kount’s global network and proprietary technologies in AI and machine learning, combined with flexible policy management, companies frustrate online criminals and bad actors driving them away from their site, their marketplace, and off their network. Kount’s continuously adaptive platform provides certainty for businesses at every digital interaction. Kount’s advances in both proprietary techniques and patented technology include mobile fraud detection, advanced artificial intelligence, multi-layer device fingerprinting, IP proxy detection and geo-location, transaction and custom scoring, global order linking, business intelligence reporting, comprehensive order management, as well as professional and managed services. Kount protects over 6,500 brands today.

Mimecast

Mimecast improves the way companies manage confidential, mission-critical business communication and data. The company’s mission is to reduce the risks users face from email, and support in reducing the cost and complexity of protecting users by moving the workload to the cloud.

The company develops proprietary cloud architecture to deliver comprehensive email security, service continuity, and archiving in a single subscription service. Its goal is to make it easier for people to protect a business in today’s fast-changing security and risk environment. The company expanded its technology portfolio in 2019 through a pair of acquisitions, buying data migration technology provider Simply Migrate to help customers and prospects move to the cloud more quickly, reliably, and inexpensively. Mimecast also purchased email security startup DMARC Analyser to reduce the time, effort, and cost associated with stopping domain spoofing attacks.

Mimecast acquired Segasec earlier this month, a leading provider of digital threat protection. With the acquisition of Segasec, Mimecast can provide brand exploit protection, using machine learning to identify potential hackers at the earliest stages of an attack. The solution also is engineered to provide a way to actively monitor, manage, block, and take down phishing scams or impersonation attempts on the Web.

MobileIron

A long-time leader in mobile management solutions, MobileIron is widely recognised by Chief Information Security Officers, CIOs and senior management teams as the de facto standard for unified endpoint management (UEM), mobile application management (MAM), BYOD security, and zero sign-on (ZSO).

The company’s UEM platform is strengthened by MobileIron Threat Defense and MobileIron’s Access solution, which allows for zero sign-on authentication. Forrester observes in their latest Wave on Zero Trust eXtended Ecosystem Platform Providers, Q4 2019 that “MobileIron’s recently released authenticator, which enables passwordless authentication to cloud services, is a must for future-state Zero Trust enterprises and speaks to its innovation in this space.”

The Wave also illustrates that MobileIron is the most noteworthy vendor as their approach to Zero Trust begins with the device and scales across mobile infrastructures. MobileIron’s product suite also includes a federated policy engine that enables administrators to control and better command the myriad of devices and endpoints that enterprises rely on today. Forrester sees MobileIron as having excellent integration at the platform level, a key determinant of how effective they will be in providing support to enterprises pursuing Zero Trust Security strategies in the future.

One Identity

One Identity is differentiating its Identity Manager identity analytics and risk scoring capabilities with greater integration via its connected system modules. The goal of these modules is to provide customers with more flexibility in defining reports that include application-specific content. Identity Manager also has over 30 direct provisioning connectors included in the base package, with good platform coverage, including strong Microsoft and Office 365 support.

Additional premium connectors are charged separately. One Identity also has a separate cloud-architected SaaS solution called One Identity Starling. One of Starling’s greatest benefits is its design that allows for it to be used not only by Identity Manager clients, but also by clients of other IGA solutions as a simplified approach to obtain SaaS-based identity analytics, risk intelligence, and cloud provisioning.

One Identity and its approach is trusted by customers worldwide, where more than 7,500 organisations worldwide depend on One Identity solutions to manage more than 125 million identities, enhancing their agility and efficiency while securing access to their systems and data – on-prem, cloud, or hybrid.

SECURITI.ai

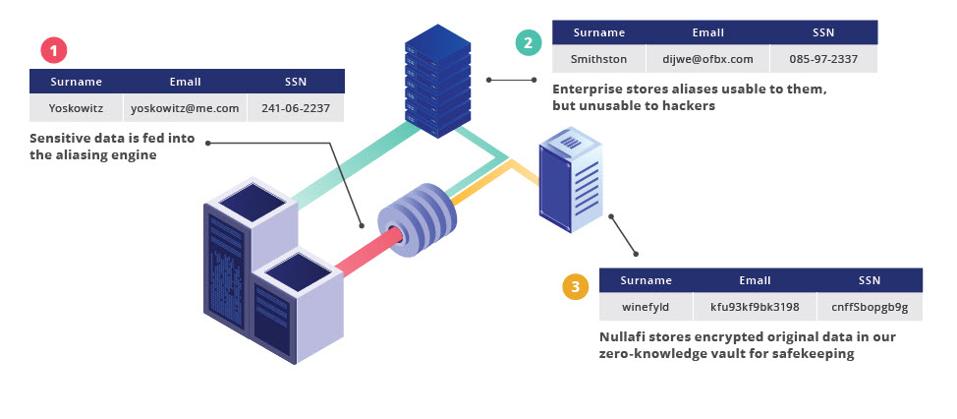

SECURITI.ai is the leader in AI-Powered PrivacyOps, that helps automate all major functions needed for privacy compliance in one place. It enables enterprises to give rights to people on their data, be responsible custodians of people’s data, comply with global privacy regulations like CCPA, and bolster their brands.

The AI-Powered PrivacyOps platform is a full-stack solution that operationalises and simplifies privacy compliance using robotic automation and a natural language interface. These include a Personal Data Graph Builder, Robotic Automation for Data Subject Requests, Secure Data Request Portal, Consent Lifecycle Manager, Third-Party Privacy Assessment, Third-Party Privacy Ratings, Privacy Assessment Automation and Breach Management. SECURITI.ai is also featured in the Consent Management section of Bessemer’s Data Privacy Stack shown below and available in Bessemer Venture Partner’s recent publication How data privacy engineering will prevent future data oil spills (10 pp., PDF, no opt-in).

Transmit Security

The Transmit Security Platform provides a solution for managing identity across applications while maintaining security and usability. As criminal threats evolve, online authentication has become reactive and less effective. Many organisations have taken on multiple point solutions to try to stay ahead, deploying new authenticators, risk engines, and fraud tools. In the process, the customer experience has suffered.

With an increasingly complex environment, many enterprises struggle with the ability to rapidly innovate to provide customers with an omnichannel experience that enables them to stay ahead of emerging threats.

Interested in hearing industry leaders discuss subjects like this and sharing their experiences and use-cases? Attend the Cyber Security & Cloud Expo World Series with upcoming events in Silicon Valley, London and Amsterdam to learn more.

Interested in hearing industry leaders discuss subjects like this and sharing their experiences and use-cases? Attend the Cyber Security & Cloud Expo World Series with upcoming events in Silicon Valley, London and Amsterdam to learn more.

Interested in hearing industry leaders discuss subjects like this and sharing their experiences and use-cases? Attend the Cyber Security & Cloud Expo World Series with upcoming events in Silicon Valley, London and Amsterdam to learn more.

Interested in hearing industry leaders discuss subjects like this and sharing their experiences and use-cases? Attend the Cyber Security & Cloud Expo World Series with upcoming events in Silicon Valley, London and Amsterdam to learn more.